Site Activity

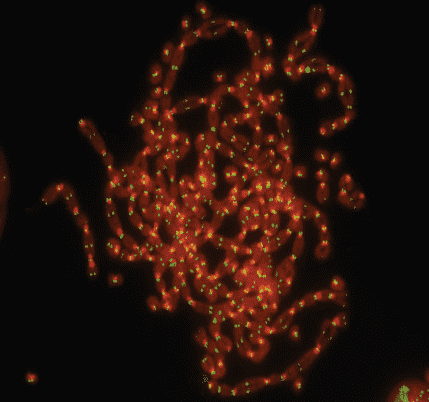

It is quite evident that exosomes play a significant role in programming the cellular age. However, it is uncertain whether they contain anti-aging factors.

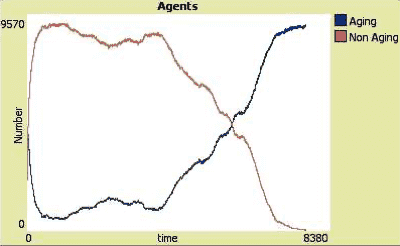

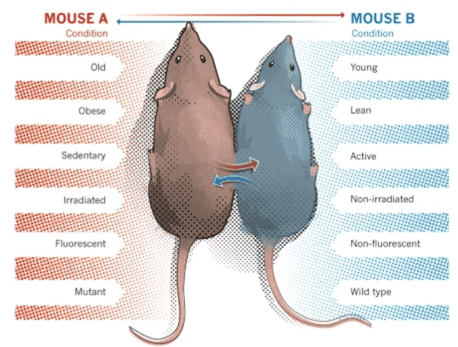

To maintain homeostasis, multicellular organisms attempt to keep the concentration of each component at a specific level. Therefore, adding exosomes from young animals might reduce the number of exosomes carrying pro-aging factors, which would explain the rejuvenation effects measured in both studies.

It is highly likely that exosomes with pro-aging factors exist because senescent cells also release exosomes.

Mike Best commented on The evolution of aging 7 months, 3 weeks ago

I heard a while back that the Conboys were doing a study in which they will also record the epigenetic age after treatment. I doubt I can find it again, but in China they claimed about a 20% reduction in methylation age in some organs with plasma dilution in mice. I still think the exosomes probably have a bigger effect, but diluting first might add a little more.

Hi Mike, thank you for sharing the article. I found it to be quite interesting. However, I didn’t notice any mention of the removal of extracellular vesicles from the blood.

It’s unfortunate that neither of the papers discussed the objections of leading scientists in the field, such as the Conboys. According to their research, dilution of pro-aging blood factors leads to rejuvenation. To confirm this, instead of injecting a saline solution in the control group, the researchers should have checked whether plasmapheresis leads to the same rejuvenation effects.

On a separate note, I found the in-vitro experiment with young extracellular vesicles in the Nature paper to be quite intriguing. I wonder how the Conboys would explain this effect.

Mike Best commented on The evolution of aging 8 months ago

https://www.nature.com/articles/s41598-023-39370-5

Here is the link to that study. I also remember a paper that focused only on the muscles, and it claimed young EVs increased Klotho and some other things there.

Thanks for the link. For some reason, this paper did not appear in my channels. I am pretty disappointed. It seems Katcher’s miraculous E5 is nothing other than the exosomes. I doubt they will get a patent for a procedure that does nothing but extract the exosomes.

Do you have a link to the second paper you mentioned?

Mike Best commented on The evolution of aging 8 months, 1 week ago

https://www.biorxiv.org/content/10.1101/2023.08.06.552148v1.full

It appears Katcher’s group has revealed it’s mostly the exosomes in the young pig blood that are reversing the epigenetic age. There was also another recent study that said when they removed the extracellular vesicles from the blood, they didn’t get the beneficial effects they saw when the vesicles remained in the transferred young blood. It would lead you to think this should work for people too and give a big healthspan effect with some lifespan extension.

Michael Pietroforte commented on When will AI surpass Facebook and Twitter as the major sources of fake news? 11 months, 1 week ago

Rodd, I am afraid there is a big misunderstanding here. It is my fault; I should have made this clear in the article. I worked a lot with neural networks for my thesis, which made me assume that this is self-evident. In discussions on Reddit, I noticed it is not.

It is crucial to understand that the inaccurate responses from ChatGPT are not related to online misinformation. Some individuals may find it hard to accept this fact since we generally associate computers with processing data, not generating it. However, neural networks function differently from the symbolic systems we have been dealing with so far.

Take the example I discussed in the article. You’ll not find any web page that claims that I was MVP for Windows Server or PowerShell and no site exists where information about my demise has been published.

During the learning process, neural networks simply identify statistical correlations in the training data. In this specific case, ChatGPT discovered various websites that mentioned my role as the founder of 4sysops and as an MVP. However, due to the abundance of content regarding Windows Server and PowerShell on 4sysops and the existence of many MVPs in this field, ChatGPT erroneously assigned a high probability to the incorrect information that I was an MVP for Windows Server and PowerShell. Such a mistake would not have been made by a professional journalist.
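To make the idea of "statistical correlations" concrete, here is a minimal sketch using a bigram model, which is a vastly simplified stand-in for a large language model and not how ChatGPT is actually built. The corpus, the names (`alice`, `bob`, `carol`, `examplesite`), and the helper functions are all hypothetical and invented for illustration. The point is only that a model that follows word statistics can produce a statement that appears in none of its training sentences.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus: invented sentences standing in for training data.
CORPUS = [
    "alice founded examplesite",
    "alice is an mvp",
    "bob is an mvp for powershell",
    "carol is an mvp for powershell",
]


def train_bigrams(sentences):
    """Count how often each word is directly followed by each other word."""
    followers = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            followers[current][nxt] += 1
    return followers


def complete(prompt, followers, max_words=5):
    """Greedily append the most frequent follower of the last word."""
    words = prompt.split()
    for _ in range(max_words):
        options = followers.get(words[-1])
        if not options:
            break  # no known continuation for this word
        words.append(options.most_common(1)[0][0])
    return " ".join(words)


followers = train_bigrams(CORPUS)
completion = complete("alice is an mvp", followers)
print(completion)            # alice is an mvp for powershell
print(completion in CORPUS)  # False: no training sentence says this
```

In this toy corpus, "mvp" is most often followed by "for powershell", so the model attaches that continuation to Alice even though every individual sentence it was trained on is accurate.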

The second example further highlights the issue at hand. There is another person with the same name as mine, who you can easily find information about on the internet. To my knowledge, he was a decorated soldier and has no connection to the field of IT. A journalist would not make the mistake of confusing us, as it is quite clear that we are two distinct individuals.

ChatGPT does not understand the meaning of the text that it is trained on. It only relies on statistical correlations between symbols without knowing their meaning. To improve accuracy, OpenAI needs to expand the connections in the neural network. However, this can be a challenge as the required computing power increases exponentially with the number of parameters.

This is what OpenAI essentially did when they upgraded from GPT-3.5 to GPT-4, which reportedly utilizes 1 trillion parameters compared to the former’s 175 billion. And now look at the slight increase in accuracy despite such a significant change in parameters. Furthermore, it seems that all GPT-4-based services are currently limited in the number of prompts per user due to computational power constraints, as even Microsoft’s Azure may not be equipped to handle the load properly. Given these limitations, I am doubtful that we can expect significant improvements in accuracy anytime soon.

Rodd commented on When will AI surpass Facebook and Twitter as the major sources of fake news? 11 months, 1 week ago

Do you think we will ever reach a point when AI will be reliable, given the amount of disinformation available to the AI from which it draws its conclusions?

Conspiracy theorists often state that one sure way to cover up a conspiracy is to saturate the event with massive amounts of disinformation on the topic. How then can we be sure that the answers and information provided by AI are accurate and reliable if the information it uses is flawed?

Personally, I agree it is useful but unreliable unless AI engineers can find a way to filter out disinformation, which may then introduce bias?

Michael Pietroforte commented on When will AI surpass Facebook and Twitter as the major sources of fake news? 11 months, 1 week ago

Yeah, neural networks have the same weaknesses as human brains. Even though the neural network runs on a computer, you can’t rely on its computations. Some researchers will say that this is a feature, not a bug, because this is why neural networks can perform tasks that symbolic systems cannot.

Tony Pieromaldi commented on When will AI surpass Facebook and Twitter as the major sources of fake news? 11 months, 1 week ago

As a test I asked ChatGPT to multiply the same two very large numbers together four times and got four different answers.

Question (asked four times in succession):

what is 487589112024886 x 65866212482446

The product of 487,589,112,024,886 and 65,866,212,482,446 is:

ChatGPT Ans 1 32,102,954,111,984,200,000,000,000,000

ChatGPT Ans 2 32,110,286,529,493,600,000,000,000,000

ChatGPT Ans 3 32,102,596,610,474,400,000,000,000,000

ChatGPT Ans 4 32,110,986,153,361,700,000,000,000,000

Will the real number please stand up!
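One way to settle this is exact integer arithmetic. The sketch below is an illustrative addition, not part of the original test: it multiplies the two operands from the question using Python's arbitrary-precision integers and compares the result with the four quoted answers. The variable names are made up for illustration. Since both operands end in 6, the exact product must also end in 6, so none of the four answers, which all end in a run of zeros, can be exact.

```python
# Operands copied from the question in the comment above.
a = 487589112024886
b = 65866212482446

# The four answers quoted in the comment, written as integers.
quoted_answers = [
    32_102_954_111_984_200_000_000_000_000,
    32_110_286_529_493_600_000_000_000_000,
    32_102_596_610_474_400_000_000_000_000,
    32_110_986_153_361_700_000_000_000_000,
]

# Python ints have arbitrary precision, so this product is exact.
exact = a * b
print(f"Exact product: {exact:,}")

for i, answer in enumerate(quoted_answers, start=1):
    # Report whether each quoted answer equals the exact product
    # and how far off it is in absolute terms.
    print(f"Ans {i}: exact match = {answer == exact}, "
          f"difference = {abs(answer - exact):,}")
```

A symbolic system such as this interpreter returns the same result on every run, which is the contrast the comment is drawing.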

Michael Pietroforte commented on When will AI surpass Facebook and Twitter as the major sources of fake news? 11 months, 1 week ago

The issue isn’t that AI encounters inaccurate information online. Even if the sources provide completely accurate information, the response generated by the AI can still be incorrect. The article discusses an example where ChatGPT mistakenly mixed up two individuals with identical names. This mistake would never have been made by a human reader.

s31064 commented on When will AI surpass Facebook and Twitter as the major sources of fake news? 11 months, 1 week ago

Personally, I find nothing surprising about this. I’ve been in the IT field for over 40 years. While most of the IT world relies heavily on their “Google-Fu”, I’ve found it to be inconsistent and variously inaccurate. No surprise then that an AI program has trouble distinguishing fact from fiction. Humans, whose brains are the ultimate computers, are subject to false memories, which can be caused in multiple ways, including something as simple as being repeatedly exposed to inaccurate information. When you consider the abundance of inaccurate information everywhere on the web, it’s really no surprise that an AI program can’t tell the difference between fact and fiction. Remember, the A in AI stands for Artificial, in other words, not genuine.